Launching an artificial intelligence-powered virtual assistant for Commerce Center reporting

30 October 2025

In the rapidly evolving payments space, accurate and timely reporting is crucial. To address this, J.P. Morgan Payments has developed a client-facing virtual assistant powered by Generative Artificial Intelligence (GenAI). The virtual assistant will be available to clients 24/7 for all reporting queries as users are introduced to Commerce Center. It is built on a Large Language Model (LLM), so clients can quickly ask questions in plain language, such as “how do I create a new report?” or “which reports will show me post-funded data?”

This language capability is what lets LLM-powered chatbots move beyond rigid, scripted flows. They understand complex language, capture user intent, and respond with context-aware answers that feel natural and engaging. In short, they deliver the kind of human-like experience clients now expect.

Building and testing for successful self-service

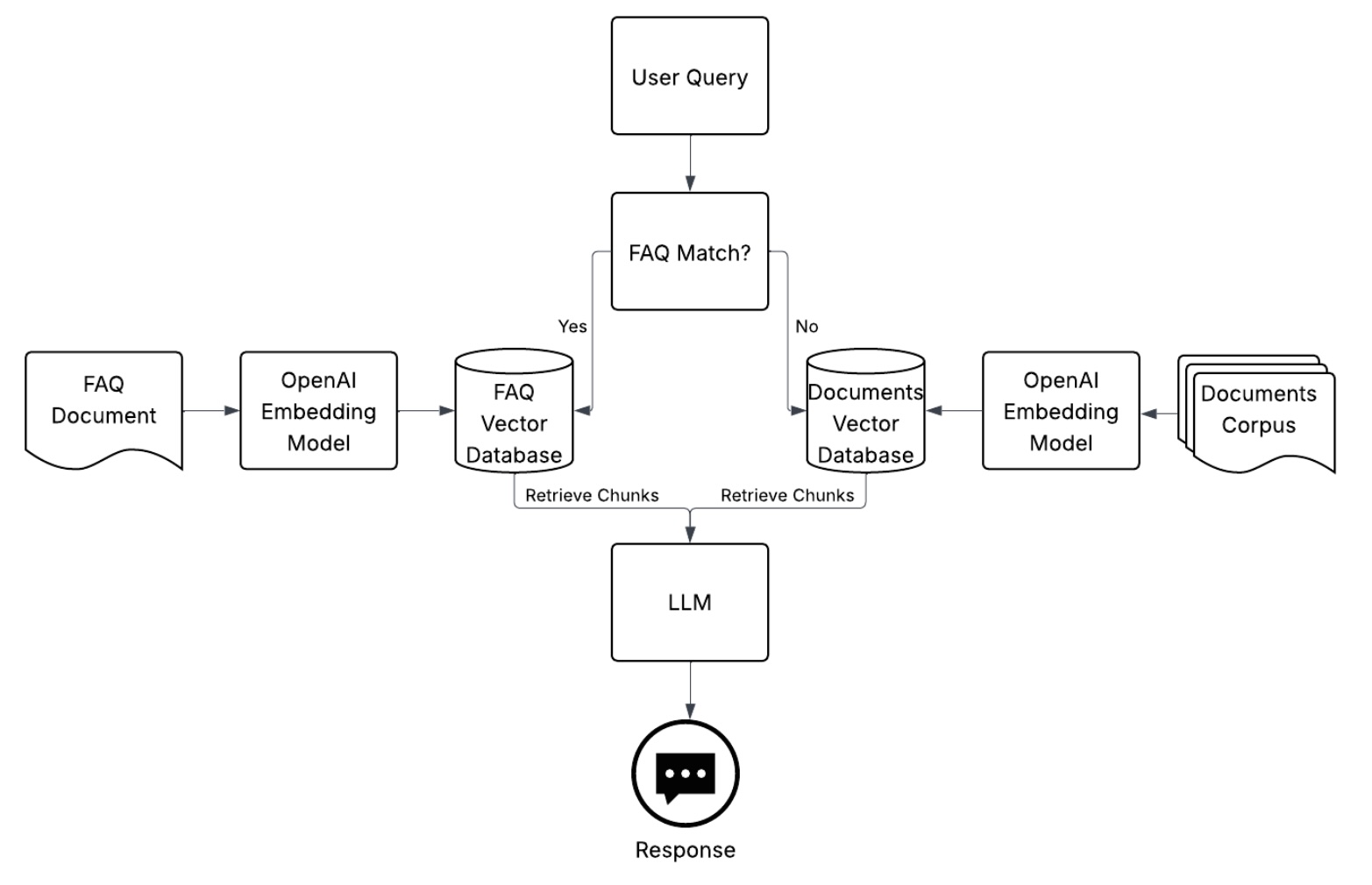

Launching any client-facing, AI-powered virtual assistant, let alone the first at J.P. Morgan Payments, was not an easy lift. We first chose a model that offered a direct API connection and the most autonomy over workflow orchestration. We then employed the Retrieval-Augmented Generation (RAG) framework, in which information relevant to a query is retrieved first and then fed into generative models. These models act as writers, transforming the retrieved information into coherent textual responses to user queries. Using RAG and the new platform's reporting support materials, the application was designed to assist clients in transitioning from legacy to new reporting.
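The retrieve-then-generate flow described above can be sketched in a few lines of Python. This is only an illustration, not the production design: the support snippets in `DOCUMENTS` are invented placeholders, the word-count cosine similarity stands in for whatever embedding model and vector store the team actually uses, and the helper names (`retrieve`, `build_prompt`) are hypothetical. The call to the generative model is left out; the sketch stops at the prompt that would be sent to it.

```python
import math
import re
from collections import Counter

# Invented placeholder snippets; the real support materials are not shown in this article.
DOCUMENTS = [
    "To create a new report, open the reports tab and select Create report.",
    "Post-funded data appears in settlement reports after funds are deposited.",
    "Legacy report names map to new report types in the migration guide.",
]


def vectorize(text):
    """Turn text into a bag-of-words vector (a stand-in for a real embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, docs, k=2):
    """Return the k documents most similar to the query."""
    query_vec = vectorize(query)
    ranked = sorted(docs, key=lambda d: cosine(query_vec, vectorize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, passages):
    """Assemble the text handed to the generative model (the 'writer')."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}\n"
    )


if __name__ == "__main__":
    question = "how do I create a new report"
    print(build_prompt(question, retrieve(question, DOCUMENTS)))
```

Keeping retrieval and prompt assembly as separate functions mirrors the split the paragraph describes: one step finds the relevant material, and a second step hands it to the model that writes the answer.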

To test the model and the prompts we wrote, we integrated with a test suite that provided two things: tracing, to identify the root cause of incorrect responses, and LLM-as-a-judge evaluations of correctness, completeness, and hallucination. With iterative testing and evaluation, we were able to quickly see the positive impact on output correctness of prompt refinement, retrieval tuning, and vector store expansion.
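An LLM-as-a-judge evaluation loop of the kind mentioned above might look roughly like the following. This is a hedged sketch rather than the test suite the team used: the `Case` fields, the wording of the judge prompt, the YES/NO scoring scheme, and the `evaluate` function are all assumptions made for illustration. The judge is passed in as a plain callable so that any model client can be plugged in.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed grading questions; the article names the criteria but not their wording.
CRITERIA = {
    "correctness": "Does the answer agree with the reference answer?",
    "completeness": "Does the answer cover every point in the reference answer?",
    "hallucination": "Does the answer state anything not supported by the context?",
}


@dataclass
class Case:
    question: str   # what the client asked
    context: str    # passages retrieved for the assistant
    answer: str     # the assistant's response under test
    reference: str  # expected answer written by a reviewer


def make_judge_prompt(case: Case, criterion: str) -> str:
    """Build the grading prompt sent to the judge model for one criterion."""
    return (
        "You are grading a virtual assistant's answer.\n"
        f"Criterion: {criterion}\n"
        f"{CRITERIA[criterion]}\n"
        f"Question: {case.question}\n"
        f"Context: {case.context}\n"
        f"Answer: {case.answer}\n"
        f"Reference: {case.reference}\n"
        "Reply with YES or NO."
    )


def evaluate(cases: list, judge: Callable[[str], str]) -> dict:
    """Return, per criterion, the fraction of cases the judge marked YES."""
    if not cases:
        raise ValueError("at least one test case is required")
    totals = {name: 0 for name in CRITERIA}
    for case in cases:
        for name in CRITERIA:
            verdict = judge(make_judge_prompt(case, name)).strip().upper()
            if verdict == "YES":
                totals[name] += 1
    return {name: totals[name] / len(cases) for name in CRITERIA}
```

Under this scoring scheme a high rate is good for correctness and completeness and bad for hallucination. Re-running the same cases after each prompt, retrieval, or vector store change gives a like-for-like comparison of the three rates.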

The model’s vector stores were expanded to cover questions for which the LLM initially could not find relevant source material, which in turn improved the accuracy of the virtual assistant’s responses.

Beyond development, we worked with content and UX teams to build an interface that clients would find easy to use. Testing with clients influenced UI designs, while content teams added to the model’s prompts to build a polite and concise virtual assistant.

Try it out

If you are enabled in Commerce Center, log in and consult the virtual assistant in the reports tab to help simplify your transition from legacy reporting and seamlessly navigate through the new reporting platform.